Differential operator

In mathematics, a differential operator is an operator defined as a function of the differentiation operator. It is helpful, as a matter of notation first, to consider differentiation as an abstract operation, accepting a function and returning another (in the style of a higher-order function in computer science).

This article considers only linear operators, even though the Schwarzian derivative is a prominent example of a non-linear operator.


Notations

The most commonly used differential operator is the action of taking the derivative itself. Common notations for this operator include:

- $\frac{d}{dx}$;

- $D$, used when the variable with respect to which one is differentiating is clear;

- $D_x$, where the variable is declared explicitly;

- $\partial_x$, an alternative notation.

First derivatives are written as above. For higher, nth-order derivatives, the following forms are useful:

- $\frac{d^n}{dx^n}$

- $D^n$

- $D^n_x$

For a function f of an argument x, the derivative of f is sometimes written as either of the following:

- $[f(x)]'$

- $f'(x)$

The D notation's use and creation is credited to Oliver Heaviside, who considered differential operators of the form

$$\sum_{k=0}^n c_k D^k$$

in his study of differential equations.
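As an illustration, an operator of Heaviside's constant-coefficient form $\sum_{k=0}^n c_k D^k$ can be applied exactly to polynomials. The following Python sketch assumes polynomials are stored as integer coefficient lists `[a0, a1, a2, ...]`, meaning $a_0 + a_1 x + a_2 x^2 + \cdots$; the names `derivative` and `heaviside_operator` are hypothetical, chosen only for this example.

```python
from typing import List

# Assumed representation: [a0, a1, a2, ...] stands for a0 + a1*x + a2*x**2 + ...
Poly = List[int]


def derivative(p: Poly) -> Poly:
    """Apply D: the coefficient of x**(j-1) in p' is j * a_j."""
    return [j * p[j] for j in range(1, len(p))]


def heaviside_operator(c: List[int], p: Poly) -> Poly:
    """Apply sum_k c[k] * D**k to the polynomial p."""
    result = [0] * len(p)
    dk = list(p)  # holds D**k p, starting with k = 0
    for ck in c:
        for j, a in enumerate(dk):
            result[j] += ck * a
        dk = derivative(dk)  # move on to D**(k+1) p
    return result


# (D**2 + 3D + 2) applied to x**2 gives 2*x**2 + 6*x + 2.
print(heaviside_operator([2, 3, 1], [0, 0, 1]))  # [2, 6, 2]
```

Because each application of D lowers the degree by one, only finitely many terms of the operator act nontrivially on a given polynomial.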

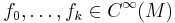

One of the most frequently seen differential operators is the Laplacian operator, defined by

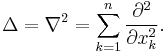
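For polynomial inputs the Laplacian $\Delta = \sum_{k=1}^n \partial^2/\partial x_k^2$ can be evaluated exactly. A minimal Python sketch, assuming a polynomial in n variables is stored as a dict from exponent tuples to integer coefficients (a representation chosen here for illustration, not a standard API):

```python
from typing import Dict, Tuple

# Assumed representation: {(e1, ..., en): c} stands for c * x1**e1 * ... * xn**en.
MultiPoly = Dict[Tuple[int, ...], int]


def partial(p: MultiPoly, k: int) -> MultiPoly:
    """Partial derivative with respect to the k-th variable (0-based)."""
    out: MultiPoly = {}
    for exps, c in p.items():
        e = exps[k]
        if e == 0:
            continue  # this monomial does not depend on x_k
        m = exps[:k] + (e - 1,) + exps[k + 1:]
        out[m] = out.get(m, 0) + c * e
    return {m: c for m, c in out.items() if c != 0}


def laplacian(p: MultiPoly, n: int) -> MultiPoly:
    """Sum of the pure second partial derivatives of p in n variables."""
    out: MultiPoly = {}
    for k in range(n):
        for m, c in partial(partial(p, k), k).items():
            out[m] = out.get(m, 0) + c
    return {m: c for m, c in out.items() if c != 0}


# x**2 - y**2 is harmonic, so its Laplacian is the zero polynomial.
print(laplacian({(2, 0): 1, (0, 2): -1}, 2))  # {}
```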

Another differential operator is the Θ operator, or theta operator, defined by[1]

This is sometimes also called the homogeneity operator, because its eigenfunctions are the monomials in z:

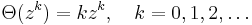

In n variables the homogeneity operator is given by

$$\Theta = \sum_{k=1}^n x_k \frac{\partial}{\partial x_k}.$$

As in one variable, the eigenspaces of Θ are the spaces of homogeneous polynomials.
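The eigenvalue property can be checked directly: for a homogeneous polynomial p of degree d, the operator $\Theta = \sum_k x_k \, \partial/\partial x_k$ returns $d \cdot p$. The Python sketch below implements Θ literally (differentiate, then multiply by $x_k$) on polynomials stored as dicts from exponent tuples to coefficients, a representation assumed here for illustration.

```python
from typing import Dict, Tuple

# Assumed representation: {(e1, ..., en): c} stands for c * x1**e1 * ... * xn**en.
MultiPoly = Dict[Tuple[int, ...], int]


def partial(p: MultiPoly, k: int) -> MultiPoly:
    """Partial derivative of p with respect to x_k (0-based index)."""
    out: MultiPoly = {}
    for exps, c in p.items():
        e = exps[k]
        if e > 0:
            m = exps[:k] + (e - 1,) + exps[k + 1:]
            out[m] = out.get(m, 0) + e * c
    return out


def theta(p: MultiPoly, n: int) -> MultiPoly:
    """Homogeneity operator: sum over k of x_k * (partial p / partial x_k)."""
    out: MultiPoly = {}
    for k in range(n):
        for exps, c in partial(p, k).items():
            m = exps[:k] + (exps[k] + 1,) + exps[k + 1:]  # multiply by x_k
            out[m] = out.get(m, 0) + c
    return {m: c for m, c in out.items() if c != 0}


p = {(3, 0): 1, (1, 2): 2}  # x**3 + 2*x*y**2, homogeneous of degree 3
print(theta(p, 2))  # {(3, 0): 3, (1, 2): 6}, i.e. 3 * p
```

With n = 1 the same function reproduces $\Theta(z^k) = k z^k$.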

Adjoint of an operator

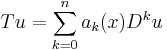

Given a linear differential operator T

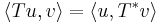

the adjoint of this operator is defined as the operator  such that

such that

where the notation  is used for the scalar product or inner product. This definition therefore depends on the definition of the scalar product.

is used for the scalar product or inner product. This definition therefore depends on the definition of the scalar product.

Formal adjoint in one variable

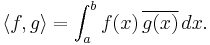

In the functional space of square integrable functions, the scalar product is defined by

If one moreover adds the condition that f or g vanishes for  and

and  , one can also define the adjoint of T by

, one can also define the adjoint of T by

This formula does not explicitly depend on the definition of the scalar product. It is therefore sometimes chosen as a definition of the adjoint operator. When  is defined according to this formula, it is called the formal adjoint of T.

is defined according to this formula, it is called the formal adjoint of T.
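A numerical sanity check of the formal adjoint may help. The Python sketch below picks a hypothetical first-order operator $Tu = a_1(x) u' + a_0(x) u$ on [0, 1] with $a_1 = 1 + x$ and $a_0 = x^2$, so the formula gives $T^*v = -(a_1 v)' + a_0 v$. With real-valued u and v that vanish at both endpoints (here $\sin \pi x$ and $x(1-x)$, again arbitrary choices), $\langle Tu, v \rangle$ and $\langle u, T^*v \rangle$ should agree up to quadrature error. Composite Simpson's rule is used as a stand-in for exact integration, and the complex conjugate is dropped because everything is real.

```python
import math


def simpson(f, a, b, n=1000):
    """Composite Simpson's rule on [a, b]; n must be even."""
    h = (b - a) / n
    total = f(a) + f(b)
    for i in range(1, n):
        total += (4 if i % 2 else 2) * f(a + i * h)
    return total * h / 3


# Hypothetical coefficients of T u = a1(x) u' + a0(x) u.
def a1(x):
    return 1 + x


def da1(x):
    return 1.0  # derivative of a1


def a0(x):
    return x ** 2


# Test functions that vanish at x = 0 and x = 1, with their derivatives.
def u(x):
    return math.sin(math.pi * x)


def du(x):
    return math.pi * math.cos(math.pi * x)


def v(x):
    return x * (1 - x)


def dv(x):
    return 1 - 2 * x


def Tu(x):
    """(T u)(x) = a1 u' + a0 u."""
    return a1(x) * du(x) + a0(x) * u(x)


def Tstar_v(x):
    """Formal adjoint with k = 0, 1: (T* v)(x) = -(a1 v)' + a0 v."""
    return -(da1(x) * v(x) + a1(x) * dv(x)) + a0(x) * v(x)


lhs = simpson(lambda x: Tu(x) * v(x), 0.0, 1.0)       # <T u, v>
rhs = simpson(lambda x: u(x) * Tstar_v(x), 0.0, 1.0)  # <u, T* v>
print(abs(lhs - rhs) < 1e-9)  # True
```

The agreement relies on the boundary term $[a_1 u v]_0^1$ from integration by parts being zero, which is why the endpoint condition matters.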

A (formally) self-adjoint operator is an operator equal to its own (formal) adjoint.

Several variables

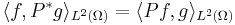

If Ω is a domain in Rn, and P a differential operator on Ω, then the adjoint of P is defined in L2(Ω) by duality in the analogous manner:

for all smooth L2 functions f, g. Since smooth functions are dense in L2, this defines the adjoint on a dense subset of L2: P* is a densely-defined operator.

Example

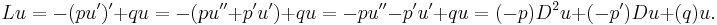

The Sturm–Liouville operator is a well-known example of a formal self-adjoint operator. This second-order linear differential operator L can be written in the form

This property can be proven using the formal adjoint definition above.

This operator is central to Sturm–Liouville theory where the eigenfunctions (analogues to eigenvectors) of this operator are considered.
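The formal self-adjointness of the Sturm–Liouville operator $Lu = -(pu')' + qu$ can also be checked mechanically. The Python sketch below assumes p, q and u are polynomials stored as integer coefficient lists, applies the formal-adjoint formula $\sum_k (-1)^k D^k [a_k u]$ with $a_2 = -p$, $a_1 = -p'$, $a_0 = q$, and compares the result with $Lu$. The particular p, q and u are arbitrary illustrative choices, and all helper names are hypothetical.

```python
from typing import List

Poly = List[int]  # [a0, a1, ...] stands for a0 + a1*x + a2*x**2 + ...


def trim(p: Poly) -> Poly:
    """Drop trailing zero coefficients so equal polynomials compare equal."""
    p = list(p)
    while p and p[-1] == 0:
        p.pop()
    return p


def add(p: Poly, q: Poly) -> Poly:
    n = max(len(p), len(q))
    return trim([(p[i] if i < len(p) else 0) + (q[i] if i < len(q) else 0)
                 for i in range(n)])


def mul(p: Poly, q: Poly) -> Poly:
    if not p or not q:
        return []
    out = [0] * (len(p) + len(q) - 1)
    for i, a in enumerate(p):
        for j, b in enumerate(q):
            out[i + j] += a * b
    return trim(out)


def scale(c: int, p: Poly) -> Poly:
    return trim([c * a for a in p])


def deriv(p: Poly, k: int = 1) -> Poly:
    """k-th derivative D**k p."""
    for _ in range(k):
        p = [j * p[j] for j in range(1, len(p))]
    return trim(p)


def formal_adjoint(coeffs: List[Poly], u: Poly) -> Poly:
    """T* u = sum_k (-1)**k D**k [a_k u] for T = sum_k a_k D**k."""
    out: Poly = []
    for k, a in enumerate(coeffs):
        out = add(out, scale((-1) ** k, deriv(mul(a, u), k)))
    return out


def sturm_liouville(p: Poly, q: Poly, u: Poly) -> Poly:
    """L u = -(p u')' + q u."""
    return add(scale(-1, deriv(mul(p, deriv(u)))), mul(q, u))


p, q = [1, 0, 1], [0, 3]      # p = 1 + x**2, q = 3x
u = [2, 1, 0, -1]             # u = 2 + x - x**3
coeffs = [q, scale(-1, deriv(p)), scale(-1, p)]  # a0 = q, a1 = -p', a2 = -p
print(sturm_liouville(p, q, u))  # [0, 10, 3, 12, -3]
print(formal_adjoint(coeffs, u) == sturm_liouville(p, q, u))  # True
```

A single polynomial u only illustrates the identity; the general proof is the symbolic computation itself.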

Properties of differential operators

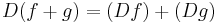

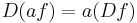

Differentiation is linear, i.e.,

where f and g are functions, and a is a constant.

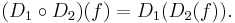

Any polynomial in D with function coefficients is also a differential operator. We may also compose differential operators by the rule

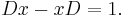

Some care is then required: firstly, any function coefficients in the operator $D_2$ must be differentiable as many times as the application of $D_1$ requires. To get a ring of such operators we must assume that the coefficients have derivatives of all orders. Secondly, this ring is not commutative: an operator gD (differentiate, then multiply by g) is in general not the same as Dg (multiply by g, then differentiate). For example, the product rule gives $(xf)' - xf' = f$, which is the relation, basic in quantum mechanics,

$$Dx - xD = 1.$$
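Treating operators as higher-order functions makes composition and the relation $Dx - xD = 1$ easy to test on polynomials. In this Python sketch, polynomials are assumed to be integer coefficient lists, `D` differentiates, `X` multiplies by x, and `compose` implements $(D_1 \circ D_2)(f) = D_1(D_2(f))$; all names are hypothetical.

```python
from typing import Callable, List

Poly = List[int]  # [a0, a1, ...] stands for a0 + a1*x + a2*x**2 + ...
Op = Callable[[Poly], Poly]


def trim(p: Poly) -> Poly:
    """Drop trailing zero coefficients so equal polynomials compare equal."""
    p = list(p)
    while p and p[-1] == 0:
        p.pop()
    return p


def D(p: Poly) -> Poly:
    """Differentiation."""
    return trim([j * p[j] for j in range(1, len(p))])


def X(p: Poly) -> Poly:
    """Multiplication by x (shifts every coefficient up one degree)."""
    return trim([0] + list(p))


def compose(d1: Op, d2: Op) -> Op:
    """Return the operator f -> d1(d2(f))."""
    return lambda f: d1(d2(f))


def subtract(p: Poly, q: Poly) -> Poly:
    n = max(len(p), len(q))
    return trim([(p[i] if i < len(p) else 0) - (q[i] if i < len(q) else 0)
                 for i in range(n)])


f = [3, 0, 5, 7]    # 3 + 5x**2 + 7x**3
DX = compose(D, X)  # f -> (x f)'
XD = compose(X, D)  # f -> x f'
print(DX(f), XD(f))            # [3, 0, 15, 28] [0, 0, 10, 21]
print(subtract(DX(f), XD(f)))  # [3, 0, 5, 7], i.e. (Dx - xD) f = f
```

The two compositions differ, yet their difference is the identity operator on every polynomial tried.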

The subring of operators that are polynomials in D with constant coefficients is, by contrast, commutative. It can be characterised another way: it consists of the translation-invariant operators.

Polynomials in D with constant coefficients also obey the shift theorem: for any constant a and polynomial P, $P(D)(e^{ax} f) = e^{ax} P(D + a) f$.
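As a worked instance, the shift theorem $P(D)(e^{ax} f) = e^{ax} P(D + a) f$ for constant-coefficient P can be verified for $P(D) = D^2$ (a case chosen here for illustration) by applying the product rule twice:

$$\begin{aligned}
D^2(e^{ax} f) &= D\big(e^{ax}(af + f')\big) \\
&= e^{ax}\big(a(af + f') + (af' + f'')\big) \\
&= e^{ax}(f'' + 2af' + a^2 f) \\
&= e^{ax}(D + a)^2 f.
\end{aligned}$$

The same argument, applied to each power $D^k$ and summed, gives the theorem for any polynomial P with constant coefficients.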

Several variables

The same constructions can be carried out with partial derivatives, differentiation with respect to different variables giving rise to operators that commute (see symmetry of second derivatives).

Coordinate-independent description

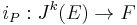

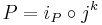

In differential geometry and algebraic geometry it is often convenient to have a coordinate-independent description of differential operators between two vector bundles. Let E and F be two vector bundles over a differentiable manifold M. An R-linear mapping of sections P : Γ(E) → Γ(F) is said to be a kth-order linear differential operator if it factors through the jet bundle Jk(E). In other words, there exists a linear mapping of vector bundles

such that

where jk: Γ(E) → Γ(Jk(E)) is the prolongation that associates to any section of E its k-jet.

This just means that for a given section s of E, the value of P(s) at a point x ∈ M is fully determined by the kth-order infinitesimal behavior of s at x. In particular, this implies that P(s)(x) is determined by the germ of s at x, which is expressed by saying that differential operators are local. A foundational result is the Peetre theorem, showing that the converse is also true: any (linear) local operator is differential.

Relation to commutative algebra

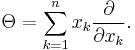

An equivalent, but purely algebraic description of linear differential operators is as follows: an R-linear map P is a kth-order linear differential operator, if for any k + 1 smooth functions  we have

we have

Here the bracket ![[f,P]:\Gamma(E)\rightarrow \Gamma(F)](/2012-wikipedia_en_all_nopic_01_2012/I/e481065515ffec65f7a9fbfefbc39669.png) is defined as the commutator

is defined as the commutator

This characterization of linear differential operators shows that they are particular mappings between modules over a commutative algebra, allowing the concept to be seen as a part of commutative algebra.
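For operators on functions of one variable (sections of the trivial line bundle), the bracket criterion with $[f, P](s) = P(f s) - f P(s)$ can be illustrated, though not proved, on polynomials. The Python sketch below assumes polynomials are integer coefficient lists and uses the arbitrary choices f = x, g = 1 + x², h = x³ and s = 1 + 2x + 3x². For the first-order operator D, two nested brackets already vanish; for the second-order operator D², two nested brackets give $2f'g's \neq 0$, and a third one is needed.

```python
from typing import Callable, List

Poly = List[int]  # [a0, a1, ...] stands for a0 + a1*x + a2*x**2 + ...
Op = Callable[[Poly], Poly]


def trim(p: Poly) -> Poly:
    """Drop trailing zero coefficients; the zero polynomial becomes []."""
    p = list(p)
    while p and p[-1] == 0:
        p.pop()
    return p


def sub(p: Poly, q: Poly) -> Poly:
    n = max(len(p), len(q))
    return trim([(p[i] if i < len(p) else 0) - (q[i] if i < len(q) else 0)
                 for i in range(n)])


def mul(p: Poly, q: Poly) -> Poly:
    if not p or not q:
        return []
    out = [0] * (len(p) + len(q) - 1)
    for i, a in enumerate(p):
        for j, b in enumerate(q):
            out[i + j] += a * b
    return trim(out)


def D(p: Poly) -> Poly:
    return trim([j * p[j] for j in range(1, len(p))])


def D2(p: Poly) -> Poly:
    return D(D(p))


def bracket(f: Poly, P: Op) -> Op:
    """The commutator [f, P]: s -> P(f*s) - f*P(s)."""
    return lambda s: sub(P(mul(f, s)), mul(f, P(s)))


f, g, h = [0, 1], [1, 0, 1], [0, 0, 0, 1]  # x, 1 + x**2, x**3
s = [1, 2, 3]                              # 1 + 2x + 3x**2

print(bracket(g, bracket(f, D))(s))               # [] (D is first order)
print(bracket(g, bracket(f, D2))(s))              # [0, 4, 8, 12], i.e. 2 f' g' s
print(bracket(h, bracket(g, bracket(f, D2)))(s))  # [] (D**2 is second order)
```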

Examples

- In applications to the physical sciences, operators such as the Laplace operator play a major role in setting up and solving partial differential equations.

- In differential topology the exterior derivative and Lie derivative operators have intrinsic meaning.

- In abstract algebra, the concept of a derivation allows for generalizations of differential operators which do not require the use of calculus. Frequently such generalizations are employed in algebraic geometry and commutative algebra. See also jet (mathematics).

See also

- Difference operator

- Delta operator

- Elliptic operator

- Fractional calculus

- Invariant differential operator

- Differential calculus over commutative algebras

- Lagrangian system

References

1. E. W. Weisstein. "Theta Operator". MathWorld. http://mathworld.wolfram.com/ThetaOperator.html. Retrieved 2009-06-12.
